

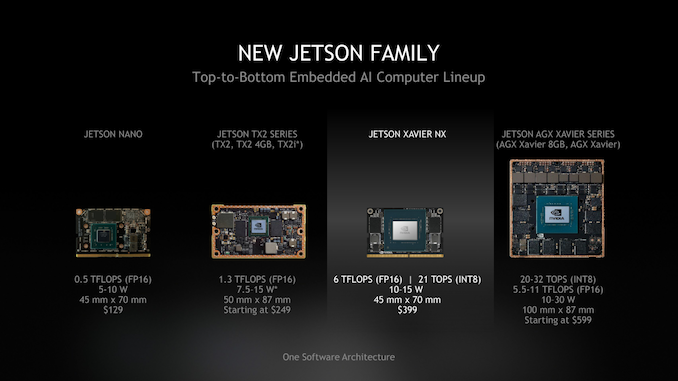

Jetson AGX Orin Developer Kit User Manual

The NVIDIA Jetson AGX Orin Developer Kit includes a high-performance, power-efficient Jetson AGX Orin module. The maxed-out variant is the Jetson AGX Orin 64GB, which boosts AI performance to up to 275 tera operations per second (TOPS), compared with the 32 TOPS offered by the previous Jetson AGX Xavier modules. The Jetson AGX Orin provides 8X the performance of Jetson AGX Xavier in the same compact form factor and with compatible pinouts. It integrates an NVIDIA Ampere architecture GPU, an Arm Cortex-A78AE CPU, next-generation deep learning and vision accelerators, high-speed interfaces, faster memory bandwidth, and multimodal sensor support, so that it can support multiple concurrent AI application pipelines.

With up to 275 TOPS for running the NVIDIA AI software stack, this developer kit lets you create advanced robotics and edge AI applications for manufacturing, logistics, retail, service, agriculture, smart city, healthcare, and life sciences.

We got our hands on one and put it through its paces. Nvidia's new Jetson AGX Orin is the same size as the older Xavier but packs 2-8x the AI horsepower, depending on the application. Nvidia's Jetson AGX Orin for the edge is also up to 63 percent more energy efficient and up to 81 percent more powerful than last year, thanks to numerous improvements. We conducted a series of performance tests of YOLOv7 model variants on different devices, from A100 cloud GPUs to the latest tiny powerhouse, the AGX Orin.

New to the benchmark is Nvidia's L4 Tensor Core GPU, which the company recently unveiled at GTC. The L4 is significantly faster than its predecessor, the T4: the card is already available from some cloud providers and delivers 2.2 to 3.1 times the T4's inference performance in the benchmarks.
New L4 card up to 3 times faster than predecessor | Image: Nvidia

Compared to an A100 GPU, the H100 GPU is also significantly stronger at inferencing transformer models such as BERT 99.9: thanks to its Transformer Engine, the H100 delivers more than four times the performance. As a result, the card promises big performance gains for many generative AI models, such as those that generate text, images, or 3D models.

Nvidia Hopper makes significant year-over-year gains

According to Nvidia, the H100 Tensor Core GPUs in the DGX H100 systems deliver up to 54 percent more inference performance than last year due to software optimizations. This jump is seen in RetinaNet inference. Other models gain as well: BERT at 99% accuracy runs 12% faster, ResNet-50 runs 13% faster, and 3D U-Net, used in medical applications, runs 31% faster.

A new feature is a network environment that tests the AI performance of different systems under more realistic conditions: data is streamed to an inference server. The test is designed to reflect more accurately how data enters the AI accelerator and is output in the real world, thus revealing bottlenecks in the network.

Nvidia is the only company to present results for all tasks in MLPerf Inference 3.0. In presenting the results, Nvidia emphasized that it sees itself as the clear leader in performance, but also as the equally important leader in the versatility of its architecture.

A comparison with some accelerators that participated in MLPerf 3.0.

In MLPerf Inference 2.0, NVIDIA delivered leading results across all workloads and scenarios with both data center GPUs and the newest entrant, the NVIDIA Jetson AGX Orin SoC platform built for edge devices and robotics. Jetson AGX Orin contains an integrated Ampere GPU that provides a total of 2048 CUDA cores and 64 Tensor cores, with up to 131 sparse TOPS of INT8 Tensor compute. Beyond the hardware, it takes great software and optimization work to get the most out of these platforms.

Nvidia leads this year's MLPerf inference benchmark. New data shows performance leaps with Hopper and new hardware.

Today MLPerf released new results of the MLPerf Inference 3.0 benchmark. The test is hosted by MLCommons and aims to transparently compare different chip architectures and system variants. In the MLPerf benchmark, hardware vendors and service providers compete with their AI systems. Servers and devices with NVIDIA GPUs, including Jetson AGX Orin, were the only edge accelerators to run all six MLPerf benchmarks.

NVIDIA Jetson AGX Orin Industrial module.

Bring your next-gen products to life with the world's most powerful AI computers for energy-efficient autonomous machines. NVIDIA® Jetson Orin modules give you up to 275 trillion operations per second (TOPS) and 8X the performance of the last generation for multiple concurrent AI inference pipelines. With its JetPack SDK, Orin runs the full NVIDIA AI platform, a software stack already proven in the data center and the cloud. And it's backed by a million developers using the NVIDIA Jetson platform.

The Jetson AGX Orin developer kit can run headless, attached to a Linux PC via one of the USB-C or micro USB ports, or as a standalone Linux box. We chose to just run it as its own self-contained PC.
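
The headline numbers quoted in this article mix ratios ("8X", "2.2 to 3.1 times") with percentages ("54 percent more"), which makes them hard to compare at a glance. The short Python sketch below converts between the two using only figures that appear in the text. The function and constant names are illustrative assumptions, and the TOPS values are vendor-stated peaks rather than anything measured here.

```python
# Figures quoted in the article (vendor-stated peak values, not measurements).
ORIN_64GB_TOPS = 275   # Jetson AGX Orin 64GB, "up to" figure
XAVIER_TOPS = 32       # Jetson AGX Xavier, as quoted


def speedup(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    if old <= 0:
        raise ValueError("baseline must be positive")
    return new / old


def percent_gain(factor: float) -> float:
    """Convert a speedup factor (e.g. 2.2x) into a percentage gain."""
    return (factor - 1.0) * 100.0


def factor_from_percent(percent: float) -> float:
    """Convert a percentage gain (e.g. 54 percent more) into a speedup factor."""
    return 1.0 + percent / 100.0


ratio = speedup(ORIN_64GB_TOPS, XAVIER_TOPS)
print(f"Orin vs. Xavier peak TOPS: {ratio:.2f}x")

# L4 vs. T4: the quoted 2.2x to 3.1x range, expressed as percentage gains.
for factor in (2.2, 3.1):
    print(f"{factor}x is a gain of {percent_gain(factor):.0f} percent")

# H100 year-over-year: the quoted 54 percent gain, expressed as a factor.
print(f"54 percent more is {factor_from_percent(54):.2f}x")
```

On these figures the peak-TOPS ratio works out to roughly 8.6, which is in line with the "8X" claim. The article's own range of 2-8x is a reminder that a ratio of peak figures is an upper bound, and that application-level speedups depend on the workload.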
